A drone is an astonishing piece of technology. Most drones today are equipped with cameras that let you capture cinematic shots from unique perspectives. You can take striking images from the sky, from a bird’s-eye view, which was uncommon not long ago.

The cameras usually mounted on drones have become extremely advanced in terms of image quality. However, despite their high resolution, the images they capture are two-dimensional and lack depth information.

To obtain depth information, a drone normally must be equipped with two or more cameras. Just as having two eyes lets us separate foreground from background in our field of view, images from two cameras set apart from each other can also provide depth information. This is why smartphone makers also fit two (or even more) cameras to capture depth, which is useful for producing the “bokeh” effect in portrait mode.

Unlike others, Amazon has come up with a patented technology that obtains depth information using a single camera mounted on a propeller blade of the drone. Since the propeller blade may rotate at speeds on the order of thousands of rpm (revolutions per minute), the single camera mounted on it can effectively be present in two locations at once.
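To get a feel for the timing, the short sketch below computes how long a blade takes to sweep half a revolution, that is, the time for the camera to travel between two positions on opposite sides of the hub. The function name and the 3,000 rpm figure are illustrative assumptions, not values taken from the patent.

```python
def half_rotation_time_s(rpm: float) -> float:
    """Return the time, in seconds, for a blade to sweep half a revolution."""
    if rpm <= 0:
        raise ValueError("rpm must be positive")
    seconds_per_revolution = 60.0 / rpm
    return seconds_per_revolution / 2.0


# Assumed example speed (hypothetical, chosen for round numbers).
example_rpm = 3000
print(f"{half_rotation_time_s(example_rpm) * 1000:.1f} ms per half revolution")
```

At speeds like this the two captures are only milliseconds apart, which is why a slowly changing scene looks nearly the same from both positions.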

The depth information from the drone cameras is used for purposes such as navigation, guidance, surveillance, and collision avoidance.

PATENTED TECHNOLOGY

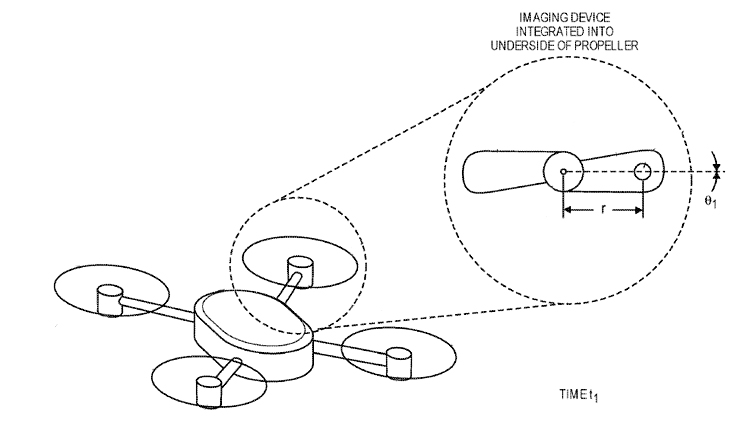

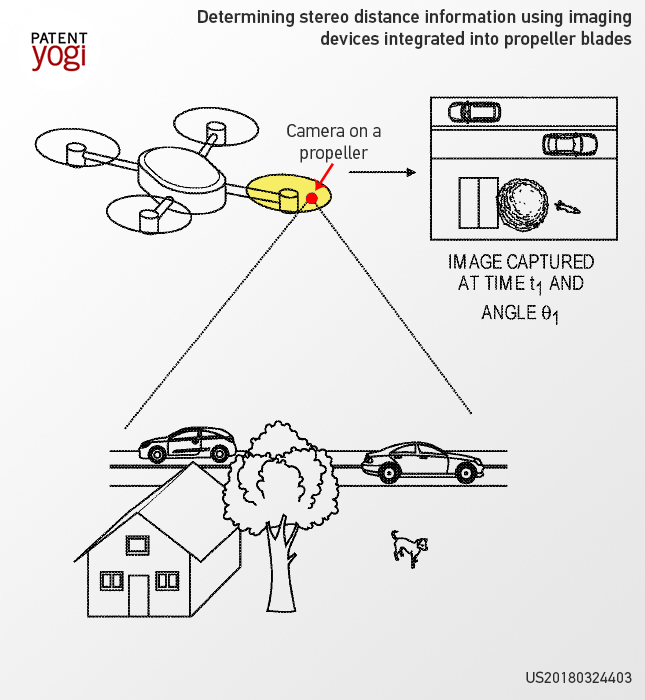

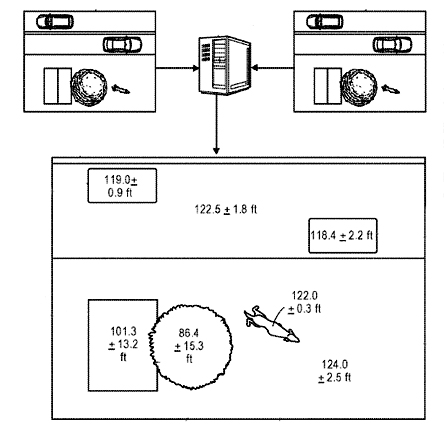

As shown in Figure 1 (above), a camera is embedded in a propeller blade of the drone. The camera captures a first image of the surrounding scene at a time instant ‘t1’. This first image is shown in Figure 2 (below).

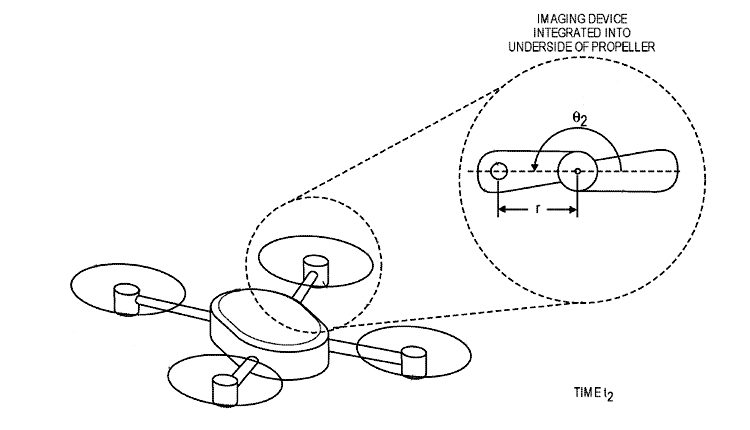

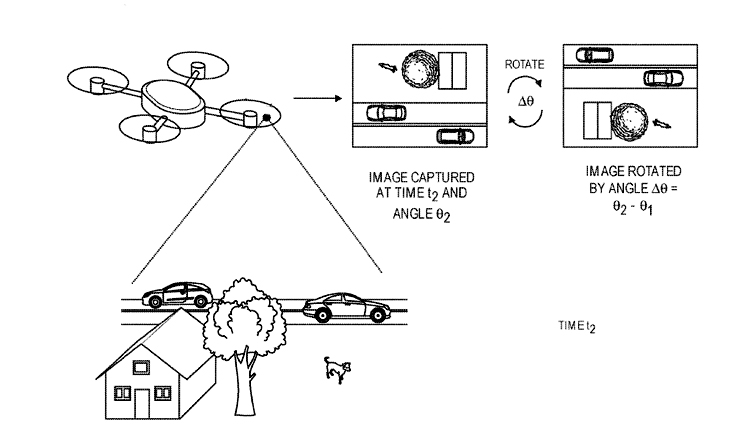

As shown in Figure 3 (above), the camera has moved to another location by a later time instant ‘t2’ because the propeller blade is rotating. The camera then captures a second image at t2, as shown in Figure 4 (below).

Before the first and second images, captured at the two different time instants t1 and t2, are analyzed, the second image is reoriented with respect to the first image.
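The article does not spell out how the reorientation is done. As a minimal sketch, assume the blade turned exactly half a revolution between the two captures, so the second image appears rotated by 180 degrees in the image plane relative to the first. Under that assumption, the rotation can be undone by reversing both the row order and the column order. The function name and the tiny list-of-lists image are hypothetical, and a real system would need to handle arbitrary blade angles.

```python
from typing import List

Image = List[List[int]]  # rows of pixel values; a stand-in for a real image type


def reorient_second_image(second_image: Image) -> Image:
    """Rotate an image by 180 degrees.

    Assumption (not from the patent text): the blade turned exactly half a
    revolution between captures, so a 180-degree rotation aligns the images.
    """
    # Reversing the rows flips vertically; reversing each row flips horizontally.
    return [row[::-1] for row in second_image[::-1]]


second = [
    [0, 1, 2],
    [3, 4, 5],
]
print(reorient_second_image(second))  # [[5, 4, 3], [2, 1, 0]]
```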

After the reorientation, as shown in Figure 5 (below), the two images are analyzed to identify the objects present in both and to determine the depth information associated with those objects. A depth map is then generated, as shown in Figure 5. The depth map provides distance data (such as an average distance along with a tolerance value) for the objects captured in the two images. For instance, the depth map may give a range of 119 feet with a 0.9-foot tolerance for an automobile, or 122 feet with a 0.3-foot tolerance for a pet.

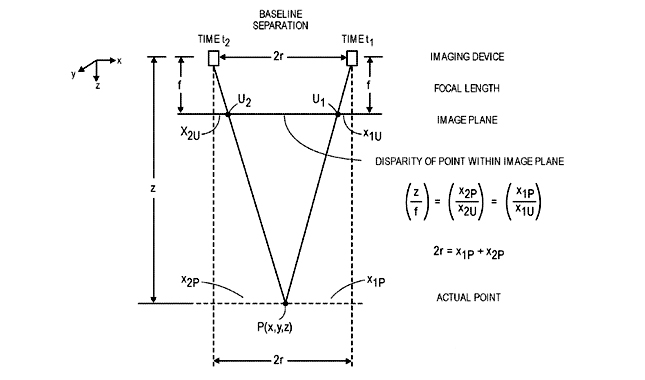

Further, the drone uses traditional geometric principles, e.g., the properties of similar triangles, to determine the range data for the depth map from the two images captured by the camera at the time instants t1 and t2. Using the mathematical expression shown in Figure 6 (below), a range (z) to a point ‘p’ on the ground can be computed. The other variables needed to determine the range (z) to one or more aspects of an object are known: the baseline distance, or separation between the two camera positions (2r, or twice the radius at which the camera sits on the propeller blade); the disparity between pixels of the two images when the images are overlapped (X2U – X1U); and the focal length of the camera (f).
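The similar-triangles reasoning above can be sketched with the standard stereo relation, range = focal length × baseline / disparity. This is a minimal illustration under stated assumptions, not the patent's exact expression: the function name and the sample numbers are hypothetical, the focal length is assumed to be expressed in pixels so that it shares units with the disparity, and the range comes out in whatever unit the radius uses.

```python
def stereo_range(focal_length_px: float, radius: float,
                 x1_px: float, x2_px: float) -> float:
    """Estimate the range z to a point seen in two images.

    Uses the standard relation z = f * b / d, where the baseline b is 2r
    (the two camera positions on opposite sides of the hub) and the
    disparity d is the pixel offset x2 - x1 of the same point.
    """
    baseline = 2.0 * radius
    disparity = x2_px - x1_px
    if disparity == 0:
        raise ValueError("zero disparity: the point is too far away to range")
    return focal_length_px * baseline / abs(disparity)


# Hypothetical values: f = 1000 px, r = 0.5 ft, disparity = 8.4 px.
z = stereo_range(focal_length_px=1000.0, radius=0.5, x1_px=100.0, x2_px=108.4)
print(f"range is about {z:.1f} ft")
```

With these made-up inputs the result lands near the 119-foot automobile range quoted earlier. Note that the baseline is small compared with the range, so the disparity is only a few pixels and small matching errors translate into noticeable range errors, which is consistent with the depth map reporting a tolerance alongside each distance.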

WHAT IS YOUR TAKE?

So what do you think about this patented technology from Amazon? Let us know in the comment section.

Amazon is at the forefront of innovation in drones. Here are some other awesome patents about drones from Amazon.

1. Amazon™ Plans to Build Drone-Ports

2. Noise Abatement System for Drones from Amazon

3. Success of Amazon’s Drone Service Depends On This Patent

4. Amazon invents a universal flying machine…that can lift any sized load

5. Amazon to build “birdhouses” for Drones

Publication Number: US20180324403

Patent Title: Determining Stereo Distance Information Using Imaging Devices Integrated into Propeller Blades

Publication date: 2018-11-08

Filing date: 2018-07-20

Inventors: Scott Patrick Boyd; Barry James O’Brien; Joshua John Watson; Scott Michael Wilcox

Original Assignee: Amazon Technologies, Inc.