It is well known that Uber plans to use Autonomous Vehicles (AVs) in its fleet. In fact, Uber is investing considerable time and resources in developing the technology.

Putting AVs on public roads is challenging, however, so Uber has laid out a detailed plan for moving from today’s human-driven vehicles to fully automated ones. A recent patent application from Uber describes this transition plan in detail.

The US Department of Transportation (DOT) uses a taxonomy of six levels of vehicle autonomy, as defined in SAE International’s standard J3016. The levels range from level-0 (i.e., no driving automation) to level-5 (i.e., full driving automation). A Tesla with Autopilot falls under level-2 autonomy (i.e., partial automation). A level-5 vehicle would not need a steering wheel, brake pedal or accelerator pedal.
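To make the taxonomy concrete, the six levels can be written down as a small enumeration. This is only an illustrative sketch: the level names follow SAE J3016, while `SAELevel` and `describe` are hypothetical names chosen for this example and are not taken from the patent application.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """The six SAE J3016 levels of driving automation (hypothetical helper type)."""
    NO_DRIVING_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_DRIVING_AUTOMATION = 2
    CONDITIONAL_DRIVING_AUTOMATION = 3
    HIGH_DRIVING_AUTOMATION = 4
    FULL_DRIVING_AUTOMATION = 5


def describe(level: SAELevel) -> str:
    """Return a readable label built from the enum member's number and name."""
    readable_name = level.name.replace("_", " ").title()
    return f"Level {level.value}: {readable_name}"


if __name__ == "__main__":
    # Print the whole range, from level-0 up to level-5.
    for level in SAELevel:
        print(describe(level))
```

Because `IntEnum` members compare like integers, a check such as `SAELevel(2) < SAELevel.FULL_DRIVING_AUTOMATION` reads naturally when reasoning about how far a given vehicle is from full automation.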

The patent application reveals Uber’s tele-assist AVs, which can get you to your destination without a driver in the driver’s seat. More importantly, according to the application, these AVs are meant to handle real-life traffic situations as seamlessly as a vehicle with a driver would. The vehicles learn quickly using neural networks, which should help Uber move toward level-5 autonomy.

PATENTED TECHNOLOGY

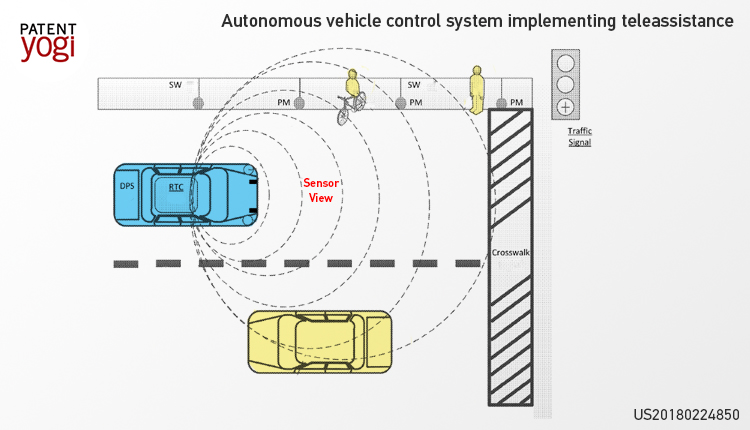

Uber’s AV carries a large number of sensors placed throughout the vehicle, such as front-facing cameras in the headlamps and a roof-top camera. The sensors include LIDAR, radar, monocular cameras, stereoscopic cameras, sonar, infrared, and other active or passive sensor systems for sensing the environment around the vehicle, as shown in the figure above. The data from these sensors is used to detect and identify objects near the vehicle, such as bicyclists, pedestrians and other vehicles.

Uber’s AV includes a tele-assist module that is enabled whenever the vehicle enters a tele-assist state. This is a state in which the vehicle encounters an anomaly in its vicinity and requires human assistance, for instance a crowded pedestrian area or a traffic jam caused by construction. In the tele-assist state, the AV generates a number of decision options for dealing with the situation. These options correspond to commands such as a wait command, an ignore command, a maneuver command, or an alternate route command.

The vehicle shares these decision options with a remotely located human operator, who chooses the best one for the situation. To understand the situation better, the operator can also access the vehicle’s sensors and examine its surroundings. The AV then carries out the selected option by controlling its acceleration, braking, and steering systems.
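The tele-assist flow described above can be sketched in a few lines of code. This is a minimal illustration under stated assumptions, not Uber’s implementation: every name here (`Command`, `DecisionOption`, `generate_options`, `resolve_teleassist`) is hypothetical, the anomaly is reduced to a text label, and the remote human is modeled as a simple callback that picks one option.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List


class Command(Enum):
    """The four command types named in the article (hypothetical encoding)."""
    WAIT = "wait"
    IGNORE = "ignore"
    MANEUVER = "maneuver"
    ALTERNATE_ROUTE = "alternate_route"


@dataclass(frozen=True)
class DecisionOption:
    """One option the vehicle offers to the remote human."""
    command: Command
    description: str


def generate_options(anomaly: str) -> List[DecisionOption]:
    """Build one option per command type.

    Assumption: a real system would derive and rank options from sensor
    data; this sketch simply offers every command for any anomaly.
    """
    return [
        DecisionOption(command, f"{command.value} in response to: {anomaly}")
        for command in Command
    ]


def resolve_teleassist(
    anomaly: str,
    choose: Callable[[List[DecisionOption]], DecisionOption],
) -> DecisionOption:
    """Run one tele-assist round: generate options, let the human choose.

    `choose` stands in for the remote human; it must return one of the
    options it was given.
    """
    options = generate_options(anomaly)
    selected = choose(options)
    if selected not in options:
        raise ValueError("the selected option was not among those offered")
    # In a vehicle, the selection would now be handed to the acceleration,
    # braking, and steering controllers; here it is just returned.
    return selected


if __name__ == "__main__":
    # Simulated human who always prefers an alternate route.
    def prefer_reroute(options: List[DecisionOption]) -> DecisionOption:
        return next(o for o in options if o.command is Command.ALTERNATE_ROUTE)

    print(resolve_teleassist("construction ahead", prefer_reroute))
```

Keeping the human behind a callback means the vehicle only ever executes an option it proposed itself, which mirrors the article’s description of the human selecting from the available options rather than driving the vehicle directly.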

WHAT IS YOUR TAKE?

So what do you think about this tele-assist technology from Uber? Let us know in the comment section.

Publication Number: US20180224850

Patent Title: Autonomous vehicle control system implementing teleassistance

Publication date: 2018-08-09

Filing date: 2017-02-08

Inventors: Benjamin Kroop, Matthew Way, Robert Sedgewick

Original Assignee: Uber Technologies Inc